Yet another thing that has bitten me working in SparkSQL in Databricks---this time it's data types.

In SQL Server, a tinyint ranges from 0 to 255 but both of them allow for 256 total values. If you attempt to cast a value that doesn't fit in that range, you're going to raise an error.

SELECT 256 AS x, CAST(256 AS tinyint) AS boom

Msg 220, Level 16, State 2, Line 1 Arithmetic overflow error for data type tinyint, value = 256.

The range for a tinyint is -128 to 127 in SparkSQL - still 256 total values. Docs call it out as well

ByteType: Represents 1-byte signed integer numbers. The range of numbers is from -128 to 127

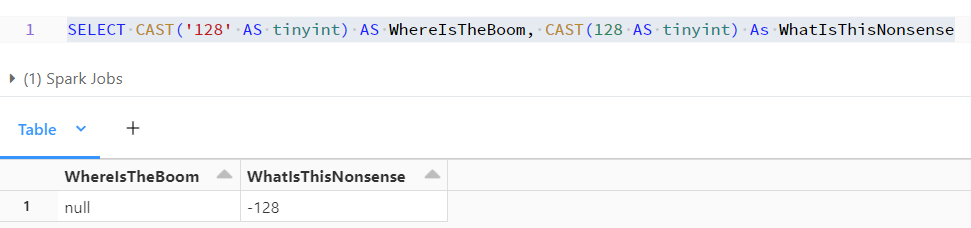

SELECT CAST('128' AS tinyint) AS WhereIsTheBoom, CAST(128 AS tinyint) As WhatIsThisNonsense

Here I select the value 128 as both a string and a number. I honestly have no idea how to interpret these results. A cast from string behaves more like a TRY_CAST but numeric overflows just cycle?

SELECT CAST(129 as tinyint) AS Negative127 is -127.

No comments:

Post a Comment